The following is the first chapter from my upcoming book Cloud Native Go (O’Reilly Press). It is still raw and largely unedited. Constructive feedback is welcome.

The most dangerous phrase in the language is, “We’ve always done it this way.”

– Grace Hopper, Computerworld (January 1976) 1

If you’re reading this book, you’ve no doubt at least heard the term “cloud native”. More likely, you’ve seen some of the many, many articles written by vendors bubbling over with breathless adoration and dollar signs in their eyes. If this is the bulk of your experience with the term so far, then you can be forgiven for thinking the term to be ambiguous and buzzwordy, just another in a series of markety expressions that might have started as something useful but have since been taken over by people trying to sell you something. See also: Agile, DevOps.

For similar reasons a web search for “cloud native definition” might lead you to think that all an application needs to be cloud native is to be written in the “right” language2 or framework, or to use the “right” technology. Certainly, your choice of language can make your life significantly easier or harder3, but it’s neither necessary nor sufficient for making an application cloud native.

So is “cloud native” just a matter of where an application runs? The term “cloud native” certainly suggests that. All you’d need to do is pour your kludgy old application into a container and run it in Kubernetes, and you’re cloud native now, right? Nope. All you’ve done is make your application harder to deploy and harder to manage4. A kludgy application in Kubernetes is still kludgy.

The Story So Far

The story of networked applications is the story of the pressure to scale.

In the beginning, there were mainframes. Every program and piece of data was stored in a single giant machine that users could access by means of dumb terminals with no computational ability of their own. All the logic and all the data lived together as one big happy monolith. It was a simpler time.

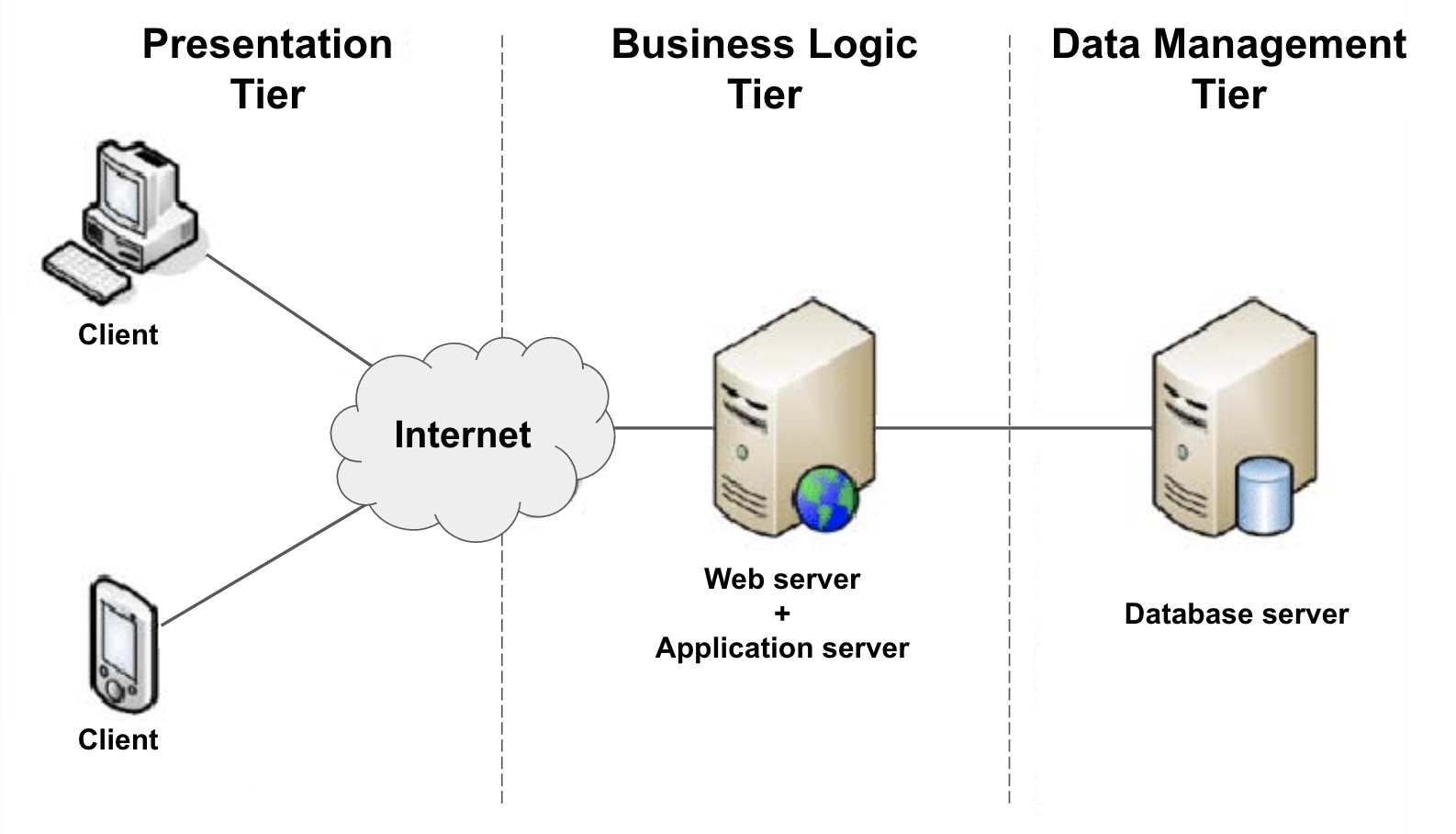

Everything changed in the 1980s with the arrival of inexpensive network-connected PCs. Unlike dumb terminals, PCs were able to do some computation of their own, making it possible to offload some of an application’s logic onto them. This new “multi-tiered” architecture – which separated presentation logic, business logic, and data (see image below) – made it possible for the first time for the components of a networked application to be modified or replaced independent of the others.

In the 1990s, the popularization of the World Wide Web and the subsequent “dot-com” gold rush introduced the world to software as a service (SaaS). Entire industries were built on the SaaS model, driving the development of more complex and resource-hungry applications, which were in turn harder to develop, maintain, and deploy. Suddenly the classic multi-tiered architecture wasn’t enough anymore. In response, business logic started to get decomposed into sub-components that could be developed, maintained, and deployed independently, ushering in the age of microservices.

In 2006, Amazon launched Amazon Web Services (AWS), which included the Elastic Compute Cloud (EC2) service. Although AWS wasn’t the first infrastructure-as-a-service (IaaS) offering, it revolutionized the on-demand availability of data storage and computing resources, bringing cloud computing – and the ability to quickly scale – to the masses, and catalyzing a massive migration of resources into “the cloud”.

Unfortunately, organizations soon learned that life at scale isn’t easy. Bad things happen, and when you’re working with hundreds or thousands (or more!) of resources, bad things happen a lot. Traffic will wildly spike up or down, essential hardware will fail, upstream dependencies will become suddenly and inexplicably inaccessible. Even if nothing goes wrong for a while, you still have to deploy and manage all of these resources. At this scale, it’s impossible (or at least wildly impractical) for humans to keep up with all of these issues manually.

Upstream and Downstream Dependencies

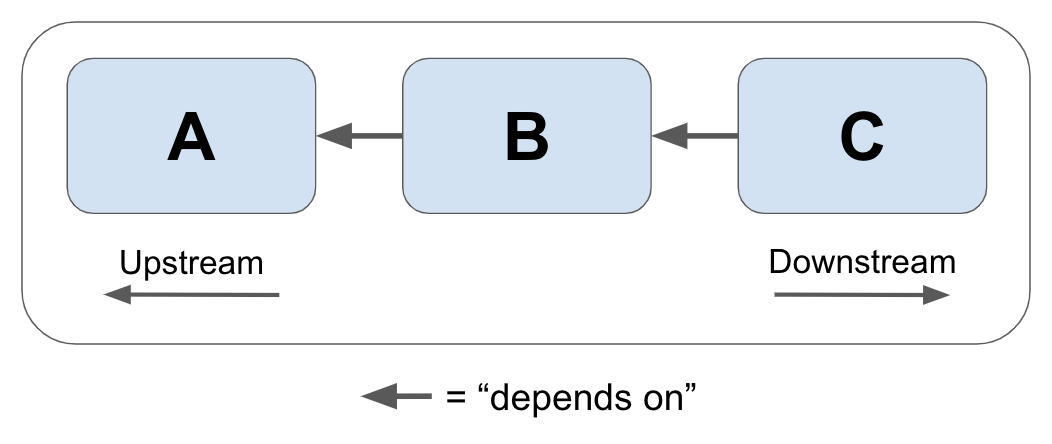

In this book we’ll sometimes use the terms “upstream dependency” and “downstream dependency” to describe the relative positions of two resources in a dependency relationship. To illustrate, imagine that we have three services: A, B, and C, as shown below.

In this scenario, Service C depends on Service B, which in turn depends on service A.

Because Service B depends on Service A, we can say that Service A is an upstream dependency of Service B. By extension, because Service C depends on Service B which depends on Service A, Service A is also a transitive upstream dependency of Service C.

Inversely, because Service A is depended upon by Service B, we can say that Service B is a downstream dependency of Service A, and that Service C is a transitive downstream dependency of Service A.
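The relationships described above can be sketched as a small dependency graph. This is only an illustration: the `dependsOn` map and the `upstreamOf` function are hypothetical names invented here, and the graph contains nothing but the three example services.

```go
package main

import (
	"fmt"
	"sort"
)

// dependsOn maps each service to the services it depends on directly,
// that is, to its direct upstream dependencies. The names are the
// hypothetical Services A, B, and C from the text.
var dependsOn = map[string][]string{
	"C": {"B"},
	"B": {"A"},
	"A": {},
}

// upstreamOf returns every direct and transitive upstream dependency of
// the named service, sorted alphabetically.
func upstreamOf(graph map[string][]string, service string) []string {
	seen := map[string]bool{}

	// visit walks the graph depth-first, recording each dependency once.
	var visit func(name string)
	visit = func(name string) {
		for _, dep := range graph[name] {
			if !seen[dep] {
				seen[dep] = true
				visit(dep)
			}
		}
	}
	visit(service)

	result := make([]string, 0, len(seen))
	for name := range seen {
		result = append(result, name)
	}
	sort.Strings(result)

	return result
}

func main() {
	fmt.Println(upstreamOf(dependsOn, "C")) // [A B]
	fmt.Println(upstreamOf(dependsOn, "B")) // [A]
	fmt.Println(upstreamOf(dependsOn, "A")) // []
}
```

Reading the map in the other direction gives the downstream dependencies: Service A appears (directly or transitively) in the results for both B and C, so both are downstream of it.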

What Is Cloud Native?

Fundamentally, a truly cloud native application incorporates everything we’ve learned about running networked applications at scale over the past 60 years. Such applications are scalable in the face of wildly changing load, resilient in the face of environmental uncertainty, and manageable in the face of ever-changing requirements. In other words, a cloud native application is built for life in a cruel, uncertain universe.

But how do we define the term “cloud native”? Fortunately for all of us5, we don’t have to. The Cloud Native Computing Foundation, a sub-foundation of the renowned Linux Foundation and something of an acknowledged authority on the subject, has already done it for us:

Cloud native technologies empower organizations to build and run scalable applications in modern, dynamic environments such as public, private, and hybrid clouds…. These techniques enable loosely coupled systems that are resilient, manageable, and observable. Combined with robust automation, they allow engineers to make high-impact changes frequently and predictably with minimal toil.

– Cloud Native Computing Foundation, CNCF Cloud Native Definition v1.06

According to this definition, cloud native applications aren’t just applications that happen to live in a cloud. They’re also scalable, loosely coupled, resilient, manageable, and observable. Taken together, these attributes, these “cloud native virtues”, constitute the foundation of what it means for a system to be “cloud native”.

As it turns out, each of those words has a pretty specific meaning of its own, so let’s break them down.

Scalability

In the context of cloud computing, scalability can be defined as the ability of a system to continue to provide correct service in the face of significant changes in demand. A system can be considered to be scalable if it doesn’t need to be redesigned to perform its intended function during or after a steep increase in demand.

Because unscalable services can seem to function perfectly well under initial conditions, scalability isn’t always a consideration during service design. While this might be fine in the short term, services that aren’t capable of growing much beyond their original expectations also have a limited lifetime value. What’s more, it’s often fiendishly difficult to refactor a service for scalability, so building with it in mind can save both time and money in the long run.

There are two different ways that a service can be scaled, each with its own associated pros and cons:

- Vertical scaling: A system can be vertically scaled (or scaled up) by up-sizing (or down-sizing) the hardware resources that are already allocated to it, for example by adding memory or CPU to a database that’s running on a dedicated computing instance. Vertical scaling has the benefit of being technically relatively straightforward, but any given instance can only be up-sized so much.

- Horizontal scaling: A system can be horizontally scaled (or scaled out) by adding (or removing) service instances, for example by increasing the number of service nodes behind a load balancer, or the number of containers in Kubernetes or another container orchestration system. This strategy has a number of advantages, including redundancy and freedom from the limits of available instance sizes. However, more replicas means greater design and management complexity, and not all services can be horizontally scaled.

Given that there are two ways of scaling a service – up or out – does that mean that any service whose hardware can be up-scaled is “scalable”? If you want to split hairs, then sure, to a point. But how scalable is it? Vertical scaling is inherently limited by the size of available computing resources, so in actuality a service that can only be scaled up isn’t very scalable at all. If you want to be able to scale by ten times, or a hundred, or a thousand, your service really has to be horizontally scalable.

So what’s the difference between a service that’s horizontally scalable and one that’s not? It all boils down to one thing: state. A service that doesn’t maintain any application state – or which has been very carefully designed to distribute its state between service replicas – will be relatively straightforward to scale out. For any other application, it will be hard. It’s that simple.

The concept of scalability will be discussed in much more depth in Chapter 7.
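To make the point about state a little more concrete, here is a minimal sketch that contrasts a replica holding its own state with one that delegates state to something shared. Every name in it (`Store`, `sharedStore`, `statefulReplica`, `statelessReplica`) is hypothetical, and the in-memory `sharedStore` merely stands in for whatever external database or cache a real service might use.

```go
package main

import (
	"fmt"
	"sync"
)

// Store is a hypothetical interface to state that lives outside the
// service, for example in a database shared by every replica.
type Store interface {
	Increment(key string) int
}

// sharedStore is an in-memory stand-in for that external store.
type sharedStore struct {
	mu     sync.Mutex
	counts map[string]int
}

func newSharedStore() *sharedStore {
	return &sharedStore{counts: map[string]int{}}
}

// Increment adds one to the named counter and returns its new value.
func (s *sharedStore) Increment(key string) int {
	s.mu.Lock()
	defer s.mu.Unlock()
	s.counts[key]++
	return s.counts[key]
}

// statefulReplica keeps its visit count in its own memory, so it only
// knows about the requests that happened to reach it.
type statefulReplica struct {
	visits int
}

func (r *statefulReplica) Visit() int {
	r.visits++
	return r.visits
}

// statelessReplica holds no application state of its own, so any replica
// can serve any request.
type statelessReplica struct {
	store Store
}

func (r statelessReplica) Visit() int {
	return r.store.Increment("visits")
}

func main() {
	// Two stateful replicas disagree about the total number of visits.
	a, b := &statefulReplica{}, &statefulReplica{}
	a.Visit()
	fmt.Println("stateful:", b.Visit()) // stateful: 1

	// Two stateless replicas agree, because the state lives elsewhere.
	store := newSharedStore()
	c, d := statelessReplica{store: store}, statelessReplica{store: store}
	c.Visit()
	fmt.Println("stateless:", d.Visit()) // stateless: 2
}
```

Adding a third or a thirtieth `statelessReplica` changes nothing about the answer each one gives, which is what makes scaling out straightforward in this toy case. The hard part has simply moved into the store.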

Loose coupling

Loose coupling is a system property and design strategy in which a system’s components have minimal knowledge of any other components. Two components can be said to be loosely coupled when changes to one generally don’t require changes to the other.

For example, web servers and web browsers can be considered to be loosely coupled: servers can be updated or even completely replaced without affecting our browsers at all. In their case, this is possible because web servers and browsers have agreed to communicate using a set of standard protocols7. In other words, they provide a service contract. Imagine the chaos if all the world’s web browsers had to be updated each time Nginx or httpd had a new version8!

It could be said that “loose coupling” is just a restatement of the whole point of microservice architectures: to partition components so that changes in one don’t necessarily affect another. This might even be true. However, this principle is often neglected, and bears repeating. The benefits of loose coupling – and the consequences if it’s neglected – cannot be overstated. It’s very easy to create a “worst of all worlds” system that pairs the management and complexity overhead of having multiple services with the dependencies and entanglements of a monolithic system: the dreaded distributed monolith.

Unfortunately, there’s no magic technology or protocol that can keep your services from being tightly coupled. Any data exchange format can be misused. There are, however, several that help, and – when applied with practices like declarative APIs and good versioning hygiene – can be used to create services that are both loosely coupled and modifiable.

These technologies and practices will be discussed and demonstrated in detail in Chapter 8.
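As one small illustration of how a data format and a little discipline can keep two services loosely coupled, consider a client that reads only the fields it needs from a JSON response. This is a sketch under assumptions: the `user` type, the `decodeUser` function, and both payloads are invented for the example. It relies on the fact that Go’s `encoding/json` ignores unrecognized fields by default.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// user holds the only fields this hypothetical client cares about.
type user struct {
	ID   int    `json:"id"`
	Name string `json:"name"`
}

// decodeUser reads a user from a JSON payload. Because encoding/json
// ignores fields it doesn't recognize by default, the client tolerates
// fields that the server adds later.
func decodeUser(payload []byte) (user, error) {
	var u user
	err := json.Unmarshal(payload, &u)
	return u, err
}

func main() {
	// The response format the client was originally written against.
	v1 := []byte(`{"id": 1, "name": "Grace"}`)

	// A later (hypothetical) server release adds a field.
	v2 := []byte(`{"id": 1, "name": "Grace", "email": "grace@example.com"}`)

	for _, payload := range [][]byte{v1, v2} {
		u, err := decodeUser(payload)
		fmt.Println(u, err) // {1 Grace} <nil> both times
	}
}
```

The server can grow its response without the client being rebuilt. Renaming or removing `id` or `name`, on the other hand, would break the contract, which is where versioning hygiene comes in.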

Resilience

Resilience (roughly synonymous with fault tolerance) is a measure of how well a system withstands and recovers from errors and faults. A system can be considered resilient if it can continue operating correctly – possibly at a reduced level – rather than failing completely when some part of the system fails.

Resilience Is Not Reliability

The terms reliability and resilience describe closely related concepts, and are often confused. But, as we’ll discuss in Chapter 6, they aren’t quite the same thing.

- The reliability of a system is its ability to provide correct service for a given time interval. Reliability, in conjunction with attributes like availability and maintainability, contributes to a system’s overall dependability.

- The resilience of a system is the degree to which it can continue to operate correctly in the face of errors and faults. Resilience, along with the other four cloud-native properties, is just one factor that contributes to reliability.

We will discuss these and other attributes in some more detail in Chapter 6. If you’re interested in a complete academic treatment, however, I highly recommend Reliability and Availability Engineering by Kishor S. Trivedi and Andrea Bobbio.

When we discuss resilience (and the other attributes as well, but especially resilience), we use the word “system” a lot. A “system”, depending on how it’s used, can refer to anything from a complex web of interconnected services (such as an entire distributed application), to a collection of closely related components (such as the replicas of a single function or service instance), or a single process running on a single machine. Every system is composed of several subsystems, which in turn are composed of sub-subsystems, which are themselves composed of sub-sub-subsystems. It’s turtles all the way down.

In the language of systems engineering, any system can contain defects, or faults, which we lovingly refer to as “bugs” in the software world. As we all know too well, under certain conditions, any fault can give rise to an error, which is the name we give to any discrepancy between a system’s intended behavior and its actual behavior. Errors have the potential to cause a system to fail to perform its required function: a failure. It doesn’t stop there though: a failure in a subsystem or component becomes a fault in the larger system; any fault that isn’t properly contained has the potential to cascade upwards until it causes a total system failure.

In an ideal world, every system would be carefully designed to prevent faults from ever occurring, but this is an unrealistic goal. You can’t anticipate or prevent every possible fault, and it’s wasteful and unproductive to try. However, by assuming that all of a system’s components are certain to fail – which they are – and designing them to respond to potential faults and limit the effects of failures, you can produce a system that’s functionally healthy even when some of its components are not.

There are many ways of designing a system for resilience. Deploying redundant components is perhaps the most common approach, but that also assumes that a fault won’t affect all components of the same type. Circuit breakers and retry logic can be included to prevent failures from propagating between components. Faulty components can even be reaped – or can intentionally fail – to benefit the larger system.

We’ll discuss all of these approaches (and more) in much more depth in Chapter 9.

Manageability

A system’s manageability is the ease (or lack thereof) with which its behavior can be modified to keep it secure, running smoothly, and compliant with changing requirements. A system can be considered manageable if it’s possible to sufficiently alter its behavior without having to alter its code.

As a system property, manageability gets a lot less attention than some of the more attention-grabbing attributes like scalability or observability. It’s every bit as critical, though, particularly in complex, distributed systems.

For example, imagine a hypothetical system that includes a service and a database, and that the service refers to the database by a URL. What if you needed to update that service to refer to another database? If the URL was hard-coded you might have to update the code and redeploy, which depending on the system might be awkward for its own reasons. Of course, you could update the DNS record to point to the new location, but what if you needed to redeploy a development version of the service, with its own development database?

A manageable system might, for example, represent this value as an easily modified environment variable; if the service that uses it is deployed in Kubernetes, adjustments to its behavior might be a matter of updating a value in a ConfigMap. A more complex system might even provide a declarative API that a developer can use to tell the system what behavior she expects. There’s no single right answer9.

Manageability isn’t limited to configuration changes. It encompasses all possible dimensions of a system’s behavior, be it the ability to activate feature flags, or rotate credentials or SSL certificates, or even (and perhaps especially) deploy or upgrade (or downgrade) system components.

Manageability Is Not Maintainability

It can be said that manageability and maintainability have some “mission overlap”, in that they’re both concerned with the ease with which a system can be modified10, but they’re actually quite different.

- Maintainability describes the ease with which changes can be made to a system’s underlying functionality, most often its code. It’s how easy it is to make changes from the inside.

- Manageability describes the ease with which changes can be made to the behavior of a running system, up to and including deploying (and re-deploying) components of that system. It’s how easy it is to make changes from the outside.

Manageable systems are designed for adaptability, and can be readily adjusted to accommodate changing functional, environmental, or security requirements. Unmanageable systems, on the other hand, tend to be far more brittle, frequently requiring ad-hoc – often manual – changes. The overhead involved in managing such systems places fundamental limits on their scalability, availability, and reliability.

The concept of manageability – and some preferred practices for implementing it in Go – will be discussed in much more depth in Chapter 10.

Observability

The observability of a system is a measure of how well its internal states can be inferred from knowledge of its external outputs. A system can be considered observable when it’s possible to quickly and consistently ask novel questions about it with minimal prior knowledge and without having to re-instrument or build new code.

On its face, this might sound simple enough; just sprinkle in some logging and slap up a couple of dashboards, and your system is observable, right? Almost certainly not. Not with modern, complex systems at any rate, in which almost any problem is the manifestation of a web of multiple things going wrong simultaneously. The Age of the LAMP Stack is over; things are harder now.

This isn’t to say that metrics, logging, and tracing aren’t important. On the contrary: they represent the building blocks of observability. But their mere existence is not enough: data is not information. They need to be used the right way. They need to be rich. Together, they need to be able to answer questions that you’ve never even thought to ask before.

The ability to detect and debug problems is a fundamental requirement for the maintenance and evolution of a robust system. But in a distributed system it’s often hard enough just figuring out where a problem is. Complex systems are just too… complex. The number of possible failure states for any given system is proportional to the product of the number of possible partial and complete failure states of each of its components, and it’s impossible to predict all of them. The traditional approach of focusing attention on the things we expect to fail simply isn’t enough.

Emerging practices in observability can be seen as the evolution of monitoring. Years of experience with designing, building, and maintaining complex systems have taught us that traditional methods of instrumentation – including but not limited to dashboards, unstructured logs, or alerting on various “known unknowns” – just aren’t up to the challenges presented by modern distributed systems.

Observability is a complex and subtle subject, but fundamentally it comes down to this: instrument your systems richly enough, and under real enough scenarios, that a future you can answer questions that you haven’t thought to ask yet.

The concept of observability – and some suggestions for implementing it – will be discussed in much more depth in Chapter 11.

Why Is Cloud Native a Thing?

The move towards “cloud native” is an example of architectural and technical adaptation, driven by environmental pressure and selection. It’s evolution; survival of the fittest. Bear with me here; I’m a biologist by training.

Eons ago, in the Dawn of Time11, applications would be built and deployed (generally by hand) to one or a small number of servers, where they were carefully maintained and nurtured. If they got sick, they were lovingly nursed back to health. If a service went down, you could often fix it with a restart. Observability was shelling into a server to run top and review logs. It was a simpler time.

In 1997 only 11% of people in the developed world and 2% worldwide were regular Internet users. The subsequent years saw exponential growth in Internet access and adoption, however, and by 2017 that number had exploded to 81% of the developed world and 48% worldwide12 – and continues to grow.

All of those users – and their money – stressed services and applied a significant incentive to scale. What’s more, as user sophistication and dependency on web services grew, so did expectations that their favorite web applications would be both feature-rich and always available.

The result was – and is – a significant evolutionary pressure towards scale, complexity, and dependability. These three attributes don’t play well together, though, and the traditional approaches simply couldn’t – and can’t – keep up. New techniques and practices had to be invented.

Fortunately, the introduction of public clouds and infrastructure-as-a-service (IaaS) made it relatively straightforward to scale infrastructure out. Shortcomings with dependability could often be compensated for with sheer numbers. But that introduced new problems. How do you maintain a hundred servers? A thousand? Ten thousand? How do you install your application onto them, or upgrade it? How do you debug it when it misbehaves? How do you even know it’s healthy? What’s merely annoying at small scale becomes hard at large scale.

Cloud native is a thing because scale is the cause of and solution to all of our problems. It’s not magic. It’s not special. All fancy language aside, cloud native techniques and technologies exist for no other reason than to make it possible to leverage the benefits of a “cloud” (quantity) to compensate for its downsides (lack of dependability).

1. Surden, Esther. “Privacy Laws May Usher In Defensive DP: Hopper.” Computerworld, 26 Jan. 1976, p. 9.

2. Which is Go. Don’t get me wrong.

3. This is still a Go book after all.

4. Have you ever wondered why so many Kubernetes migrations fail?

5. Especially for me. I get to write this cool book.

6. Cloud Native Computing Foundation. “CNCF Cloud Native Definition v1.0”, GitHub, 14 Aug. 2019, http://github.com/cncf/toc/blob/master/DEFINITION.md.

7. Those of us who remember the Browser Wars of the 1990s will recall that this wasn’t always strictly true.

8. Or if every web site required a different browser. That would stink, wouldn’t it?

9. There are some wrong ones though.

10. Plus, they both start with ‘M’. Super confusing.

11. That time is the 1990s.

12. International Telecommunication Union (ITU). “Internet users per 100 inhabitants 1997 to 2007” and “Internet users per 100 inhabitants 2005 to 2017.” ICT Data and Statistics (IDS).

Last modified on 2020-06-15